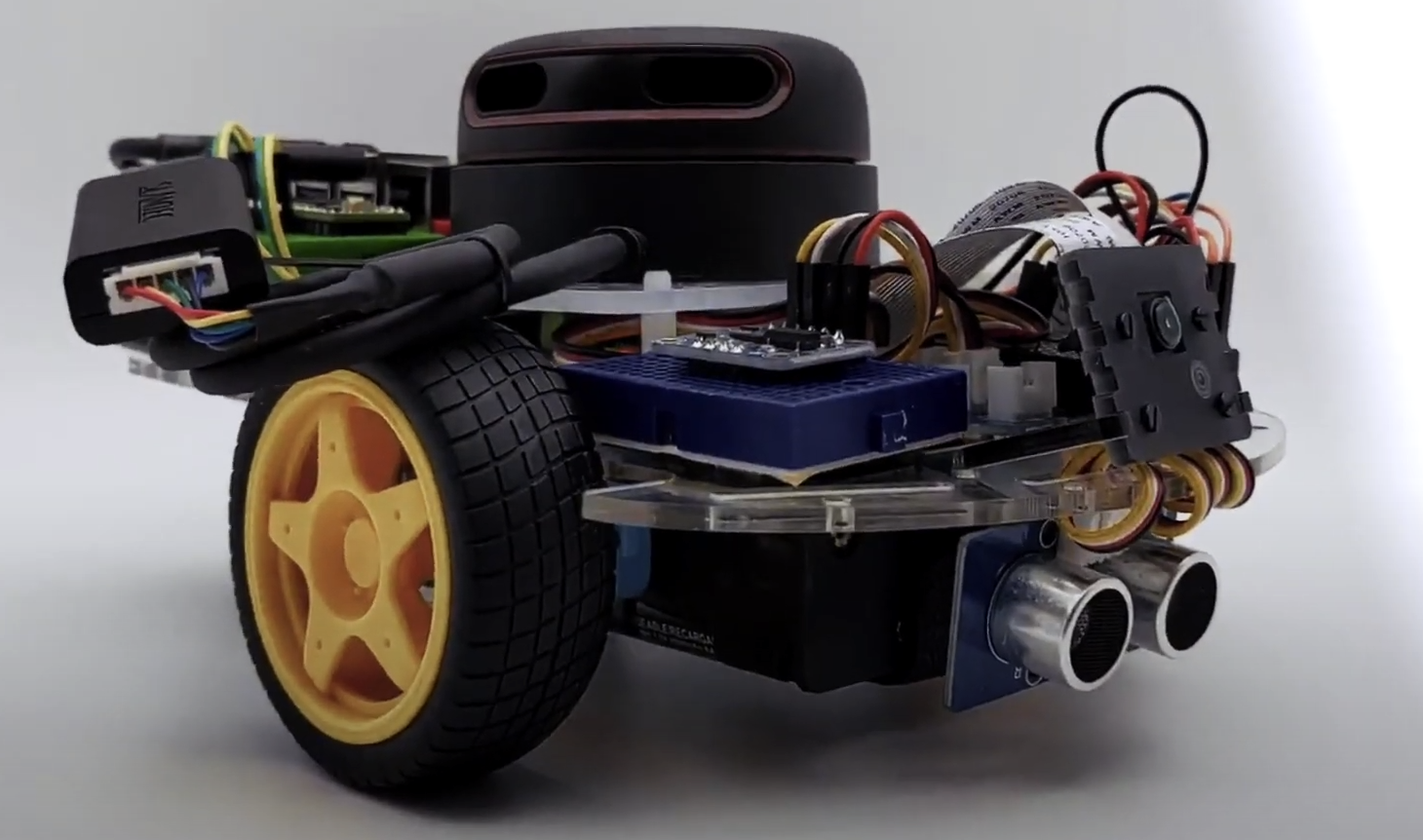

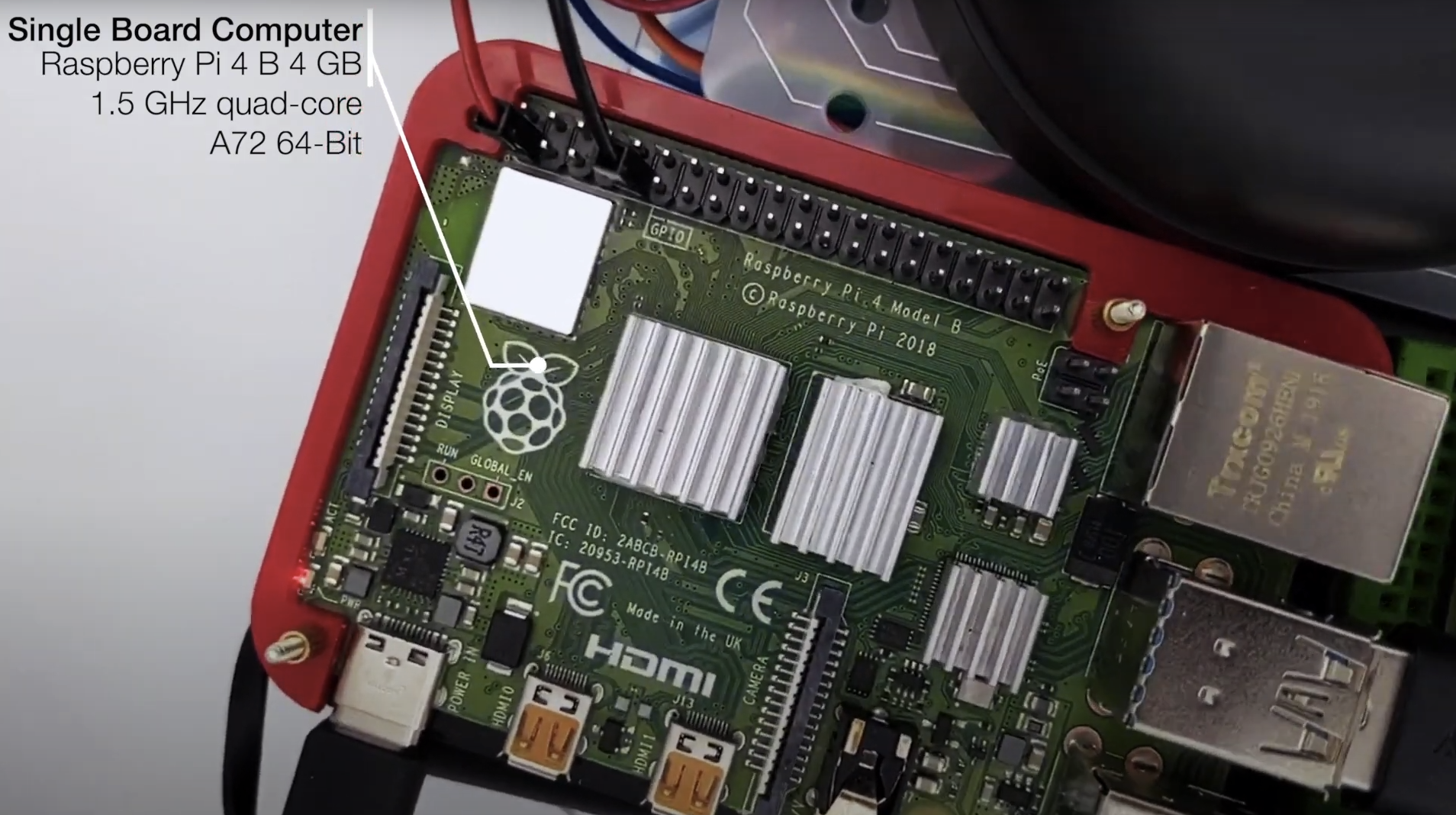

DiffBot is an autonomous differential drive robot with two wheels. Its main processing unit is a Raspberry Pi 4 B running Ubuntu Mate 20.04 and the ROS 1 (ROS Noetic) middleware. This repository contains ROS driver packages, a ROS Control hardware interface for the real robot and configurations for simulating DiffBot. The formatted documentation can be found at: https://ros-mobile-robots.com.

| DiffBot | Lidar SLAMTEC RPLidar A2 |
|---|---|
| | |

If you are looking for a 3D printable modular base, see the remo_description repository. You can use it directly with the software of this diffbot repository.

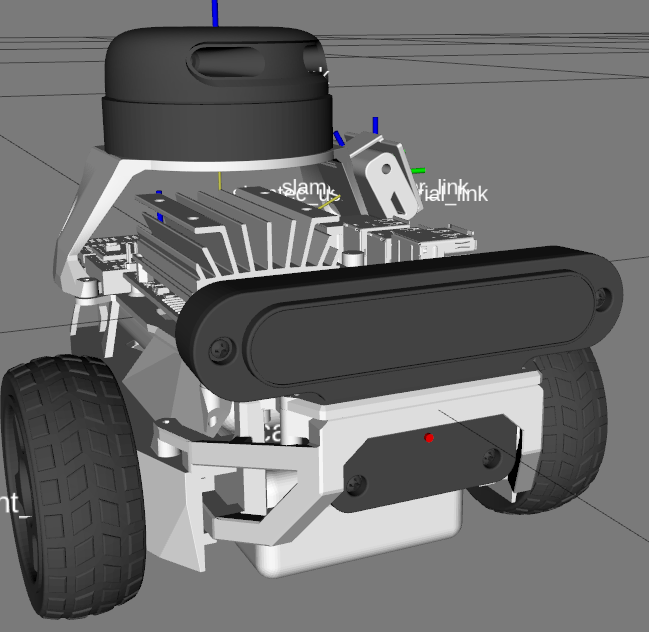

| Remo | Gazebo Simulation | RViz |
|---|---|---|
| | | |

It provides mounts for different camera modules, such as Raspi Cam v2, OAK-1 and OAK-D, and you can design your own if you like. There is also support for different single board computers (Raspberry Pi and Nvidia Jetson Nano) through two changeable decks. You are again free to create your own.

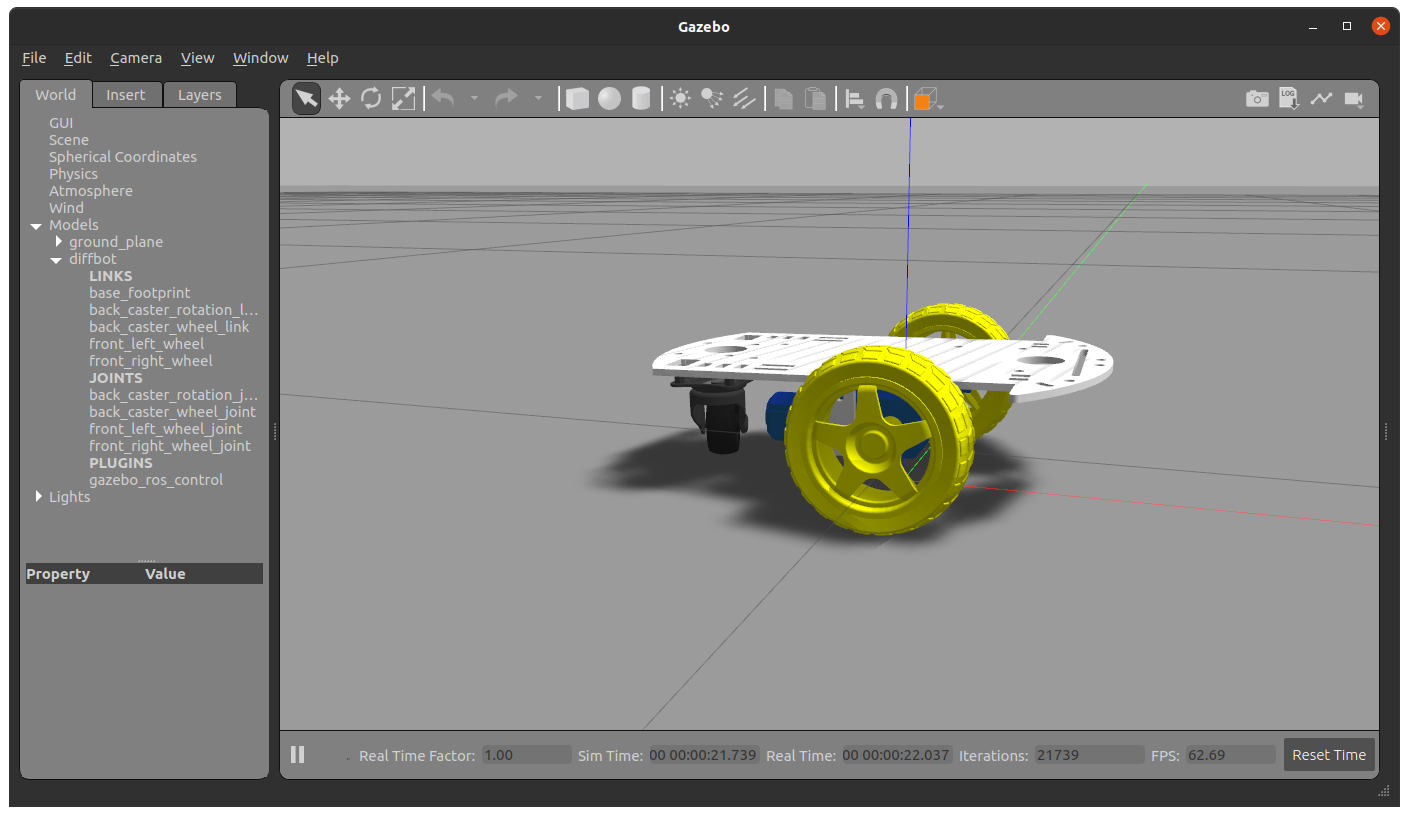

| Real robot | Gazebo Simulation |
|---|---|
| | |

- `diffbot_base`: ROS Control hardware interface including `controller_manager` control loop for the real robot
- `diffbot_bringup`: Launch files to bring up the hardware drivers (camera, lidar, imu, ultrasonic, ...) for the real DiffBot robot
- `diffbot_control`: Configurations for the `diff_drive_controller` of ROS Control used in Gazebo simulation and the real robot
- `diffbot_description`: URDF description of DiffBot including its sensors
- `diffbot_gazebo`: Simulation specific launch and configuration files for DiffBot
- `diffbot_msgs`: Message definitions specific to DiffBot, for example the message for encoder data
- `diffbot_navigation`: Navigation based on `move_base` launch and configuration files
- `diffbot_slam`: Simultaneous localization and mapping using different implementations to create a map of the environment

The packages are written for and tested with ROS 1 Noetic on Ubuntu 20.04 Focal Fossa. For the real robot Ubuntu Mate 20.04 for arm64 is installed on the Raspberry Pi 4 B with 4GB. The communication between the mobile robot and the work pc is done by configuring the ROS Network, see also the documentation.
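As a rough sketch of what the ROS network configuration amounts to: ROS 1 machines find each other through the `ROS_MASTER_URI` and `ROS_IP` environment variables. The helper name and the IP addresses below are hypothetical placeholders, and which machine runs `roscore` is an assumption to check against the documentation.

```shell
# Print the export lines for a ROS 1 network setup.
# $1: host or IP of the machine running roscore (placeholder value in the usage below)
# $2: IP address of the machine these settings are applied on (placeholder as well)
ros_network_exports() {
  local master_host="$1"
  local own_ip="$2"
  # 11311 is the default port of the ROS master.
  printf 'export ROS_MASTER_URI=http://%s:11311\n' "$master_host"
  printf 'export ROS_IP=%s\n' "$own_ip"
}

# Usage with made-up addresses, applied to the current shell:
#   eval "$(ros_network_exports 192.168.0.10 192.168.0.20)"
```

The same two variables have to be set on both the robot and the work pc, each with its own address in `ROS_IP`.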

The required Ubuntu packages are listed in the documentation. Other ROS catkin packages such as rplidar_ros need to be cloned into the catkin workspace. It is planned to use vcstool in the future to automate the dependency installations.

To build the packages in this repository, clone it in the src folder of your ROS Noetic catkin workspace:

    catkin_ws/src$ git clone https://github.com/fjp/diffbot.git

After installing the required dependencies, build the catkin workspace, either with `catkin_make`:

    catkin_ws$ catkin_make

or using catkin-tools:

    catkin_ws$ catkin build

Finally, source the newly built packages with the `devel/setup.*` script, depending on your used shell:

    # For bash
    catkin_ws$ source devel/setup.bash

    # For zsh
    catkin_ws$ source devel/setup.zsh

The following sections describe how to run the robot simulation and how to make use of the real hardware using the available package launch files.

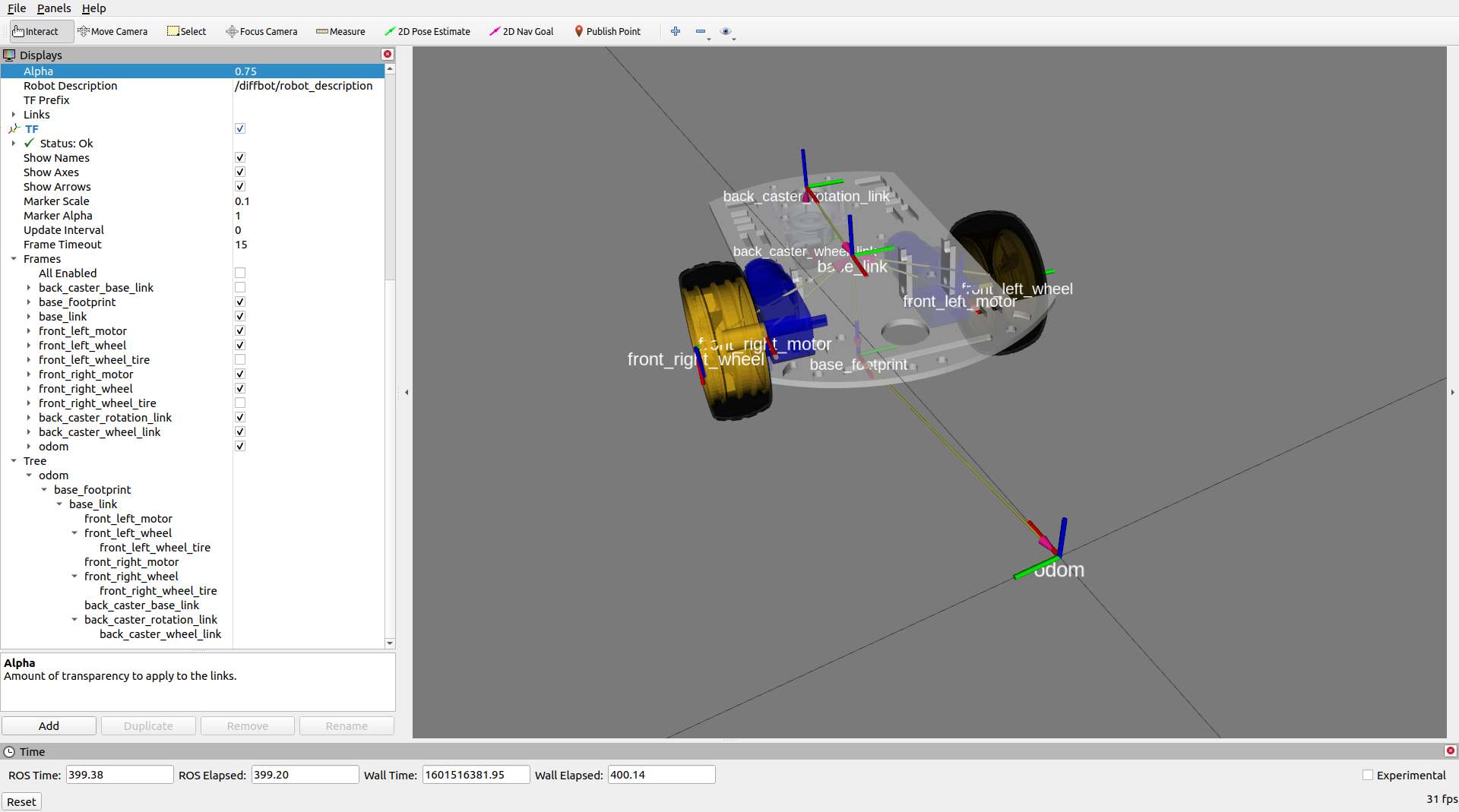

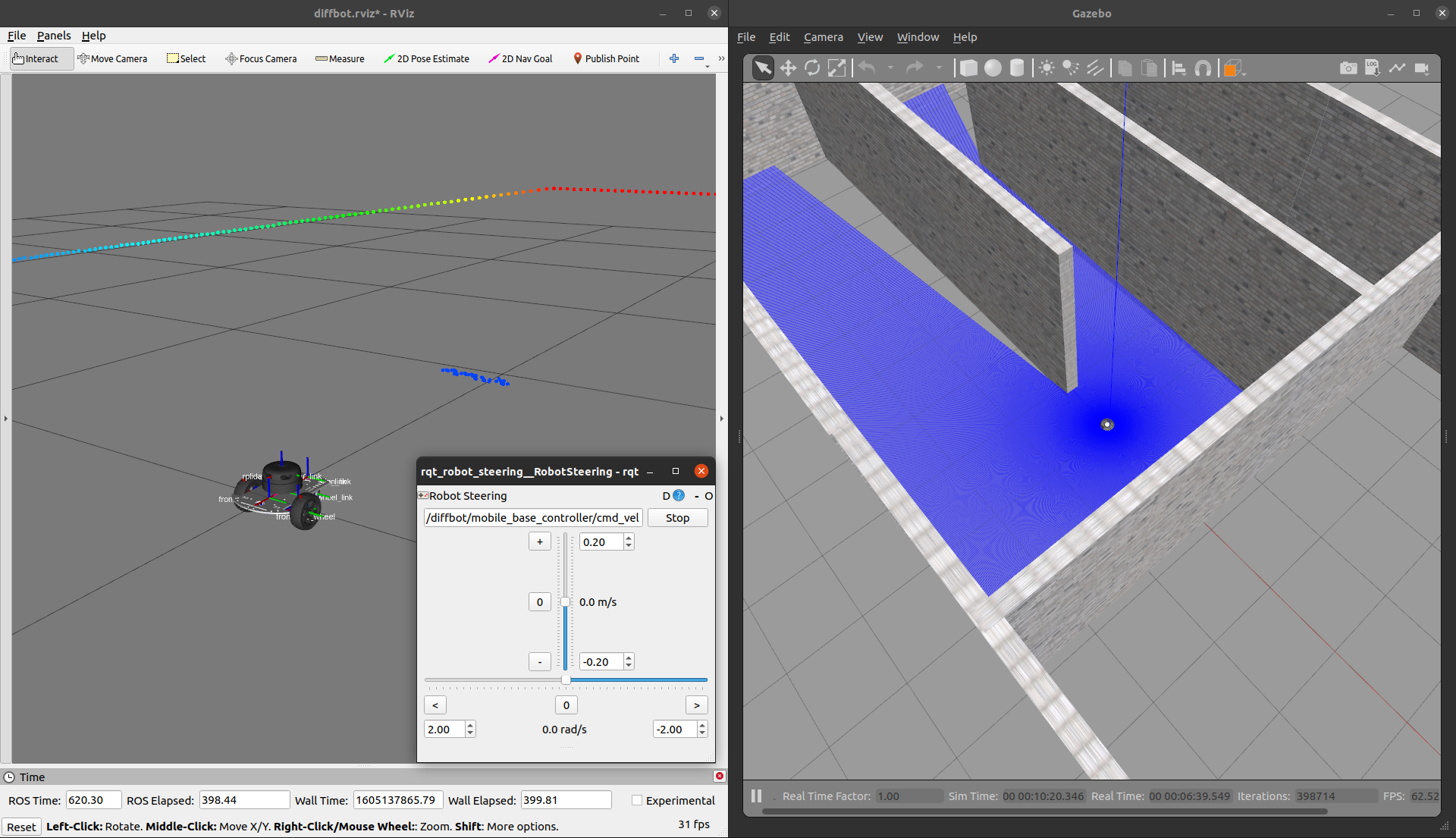

Control the robot inside Gazebo and view what it sees in RViz using the following launch file:

    roslaunch diffbot_control diffbot.launch world_name:='$(find diffbot_gazebo)/worlds/corridor.world'

To run the `turtlebot3_world`, make sure to download it to your `~/.gazebo/models/` folder, because the `turtlebot3_world.world` file references the `turtlebot3_world` model.
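Whether the model is in place can be checked before launching. The following is a minimal sketch, not part of this repository: the function name is hypothetical, and it only relies on the fact that a Gazebo model folder contains a `model.config` file.

```shell
# Return success if the named Gazebo model is present in the models folder.
# $1: model name, e.g. turtlebot3_world
# $2: models folder (optional, defaults to ~/.gazebo/models as named in the text)
gazebo_model_installed() {
  local model="$1"
  local models_dir="${2:-$HOME/.gazebo/models}"
  # Every Gazebo model directory carries a model.config manifest.
  [ -f "$models_dir/$model/model.config" ]
}

# Usage:
#   gazebo_model_installed turtlebot3_world || echo "turtlebot3_world model is missing"
```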

| corridor.world | turtlebot3_world.world |
|---|---|
| | |

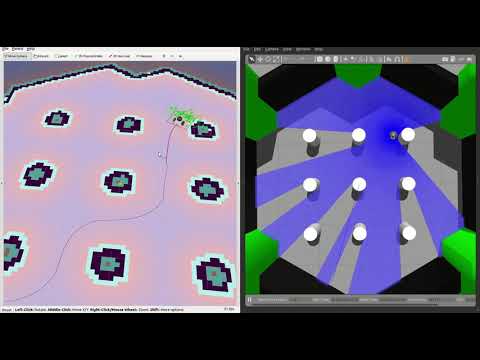

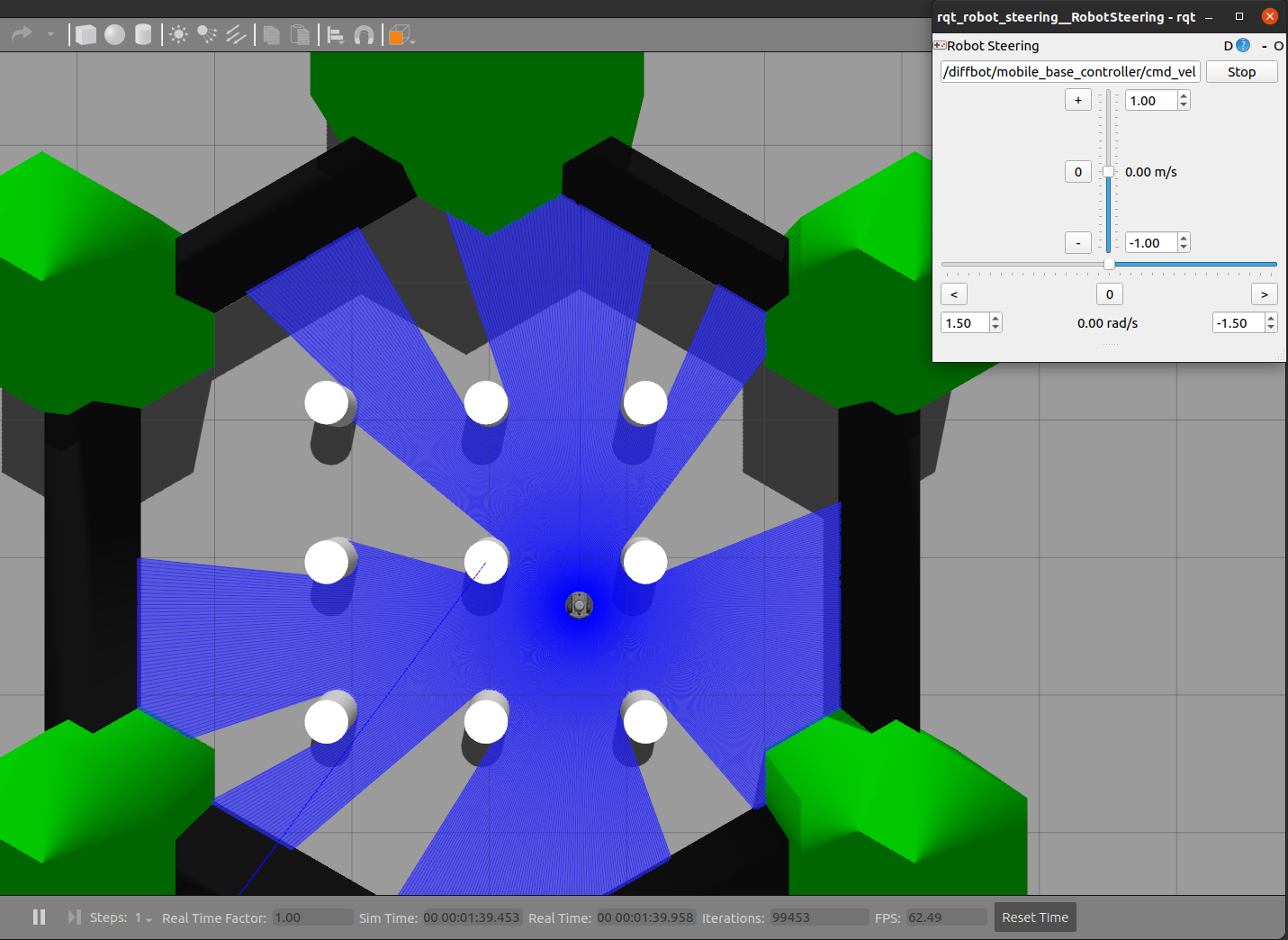

To navigate the robot in the simulation run this command:

    roslaunch diffbot_navigation diffbot.launch world_name:='$(find diffbot_gazebo)/worlds/turtlebot3_world.world'

Navigate the robot in a known map from the running `map_server` using the 2D Nav Goal in RViz.

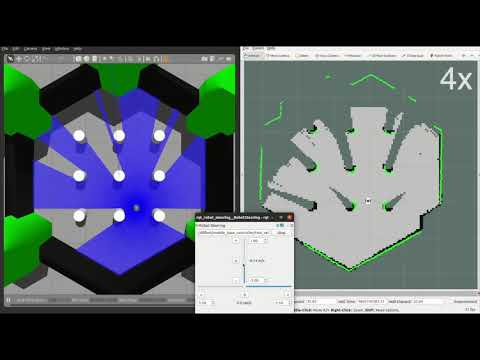

To map a new simulated environment using slam gmapping, first run

    roslaunch diffbot_gazebo diffbot.launch world_name:='$(find diffbot_gazebo)/worlds/turtlebot3_world.world'

and in a second terminal execute

    roslaunch diffbot_slam diffbot_slam.launch slam_method:=gmapping

Then explore the world with the `teleop_twist_keyboard` or with the already launched `rqt_robot_steering` GUI plugin:

    roslaunch diffbot_control diffbot.launch

View just the `diffbot_description` in RViz:

    roslaunch diffbot_description view_diffbot.launch

The following video shows how to map a new environment and navigate in it.

First, bring up the robot hardware including its laser with the following launch file in the `diffbot_bringup` package.

Make sure to run this on the real robot (e.g. connect to it via ssh):

    roslaunch diffbot_bringup diffbot_bringup_with_laser.launch

Then, in a new terminal on your remote/work pc (not the single board computer), run gmapping SLAM with the same command as in the simulation:

    roslaunch diffbot_slam diffbot_slam.launch slam_method:=gmapping

As you can see in the video, this should open up RViz and the rqt_robot_steering plugin.

Next, steer the robot around manually and save the map with the following command when you are done:

    rosrun map_server map_saver -f office
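`map_saver -f office` writes two files into the current directory: `office.pgm` (the occupancy grid image) and `office.yaml` (its metadata). A small check along these lines can confirm that the map was saved completely; the function name is hypothetical and not part of this repository.

```shell
# Return success if both files written by map_saver exist for a map name.
# $1: the name (or path prefix) passed to map_saver via -f, e.g. office
saved_map_complete() {
  local name="$1"
  # <name>.pgm holds the occupancy image, <name>.yaml the map metadata.
  [ -f "${name}.pgm" ] && [ -f "${name}.yaml" ]
}

# Usage, in the directory where the map was saved:
#   saved_map_complete office && echo "office.pgm and office.yaml are present"
```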

Finally it is possible to use the created map for navigation, after running the following launch files:

On the single board computer (e.g. Raspberry Pi) make sure that the following is launched:

    roslaunch diffbot_bringup diffbot_bringup_with_laser.launch

Then on the work/remote pc run the `diffbot_hw.launch` from the `diffbot_navigation` package:

    roslaunch diffbot_navigation diffbot_hw.launch

Among other essential navigation and map server nodes, this will also launch an instance of RViz on your work pc where you can use its tools to:

- Localize the robot with the "2D Pose Estimate" tool (green arrow) in RViz

- Use the "2D Nav Goal" tool in RViz (red arrow) to send goals to the robot
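Goals can also be sent without RViz. The sketch below builds the message that the "2D Nav Goal" tool would publish. It assumes RViz's default goal topic `/move_base_simple/goal` and a fixed frame named `map`; neither is confirmed by this README, so verify both with `rostopic list` and your RViz settings. The function name is hypothetical.

```shell
# Build the YAML payload for a geometry_msgs/PoseStamped goal.
# $1, $2: target x and y position in meters, expressed in the assumed "map" frame.
# The orientation is left at the identity quaternion, i.e. a yaw of zero.
nav_goal_yaml() {
  local x="$1"
  local y="$2"
  printf '{header: {frame_id: "map"}, pose: {position: {x: %s, y: %s, z: 0.0}, orientation: {w: 1.0}}}' "$x" "$y"
}

# Usage (publishes the goal once; the topic name is an assumption):
#   rostopic pub -1 /move_base_simple/goal geometry_msgs/PoseStamped "$(nav_goal_yaml 1.0 0.5)"
```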

Contributions to these tasks are welcome, see also the contribution section below.

- Migrate from ROS 1 to ROS 2

- Add

diffbot_driverpackage for ultrasonic ranger, imu and motor driver node code. - Make use of the imu odometry data to improve the encoder odometry using

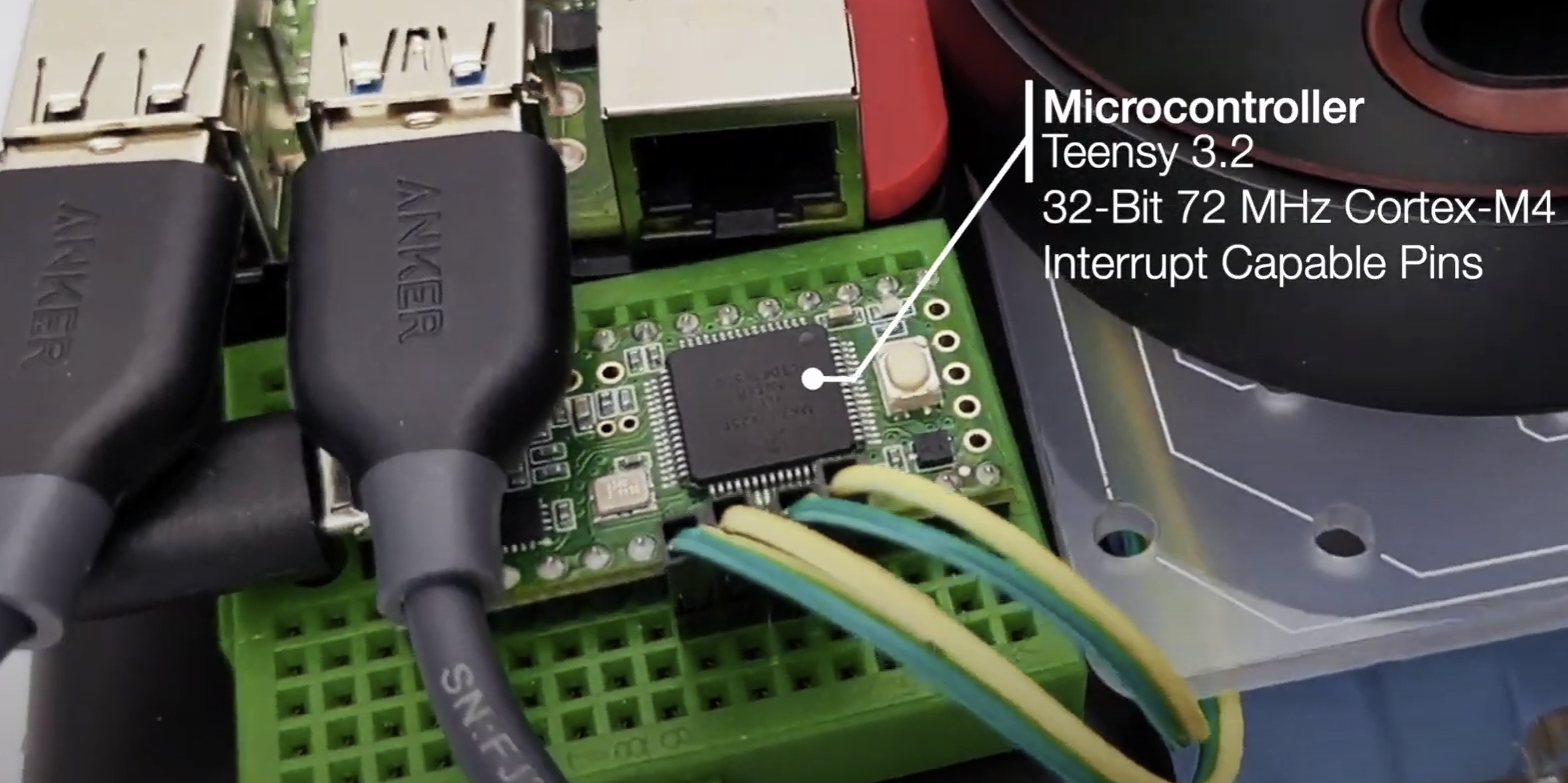

robot_pose_ekf. - The current implementation of the ROS Control

hardware_interface::RobotHWuses a high level PID controller. This is working but also test a low level PID on the Teensy 3.2 mcu using the Arduino library of the Grove i2c motor driver. -> This is partly implemented (seediffbot_base/scripts/base_controller) Also replaceWire.hwith the improvedi2c_t3library.

- Test different global and local planners and add documentation

- Add

diffbot_mbfpackage usingmove_base_flex, the improved version ofmove_base.

To enable object detection or semantic segmentation with the RPi Camera, the Raspberry Pi 4 B will be updated with a Google Coral USB Accelerator. Possible useful packages:

- Use the generic `teleop_twist_keyboard` and/or `teleop_twist_joy` package to drive the real robot and in simulation.
- Playstation controller
- `vcstool` to simplify external dependency installation
- Adding instructions how to use `rosdep` to install required system dependencies
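The `vcstool` and `rosdep` items could eventually be combined roughly as follows. This is only a sketch of how the two tools are commonly used together: the function name and the `.repos` file name are hypothetical, and this README does not describe such a file.

```shell
# Sketch of a dependency installation step for a catkin workspace.
# $1: path to the catkin workspace
# $2: path to a .repos file listing external source packages (hypothetical file)
install_dependencies() {
  local ws="$1"
  local repos_file="$2"
  # Clone the repositories listed in the .repos file into the src folder.
  vcs import "$ws/src" < "$repos_file" || return 1
  # Install the system packages declared in the package.xml files under src.
  rosdep install --from-paths "$ws/src" --ignore-src --rosdistro noetic -y
}

# Usage:
#   install_dependencies ~/catkin_ws dependencies.repos
```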

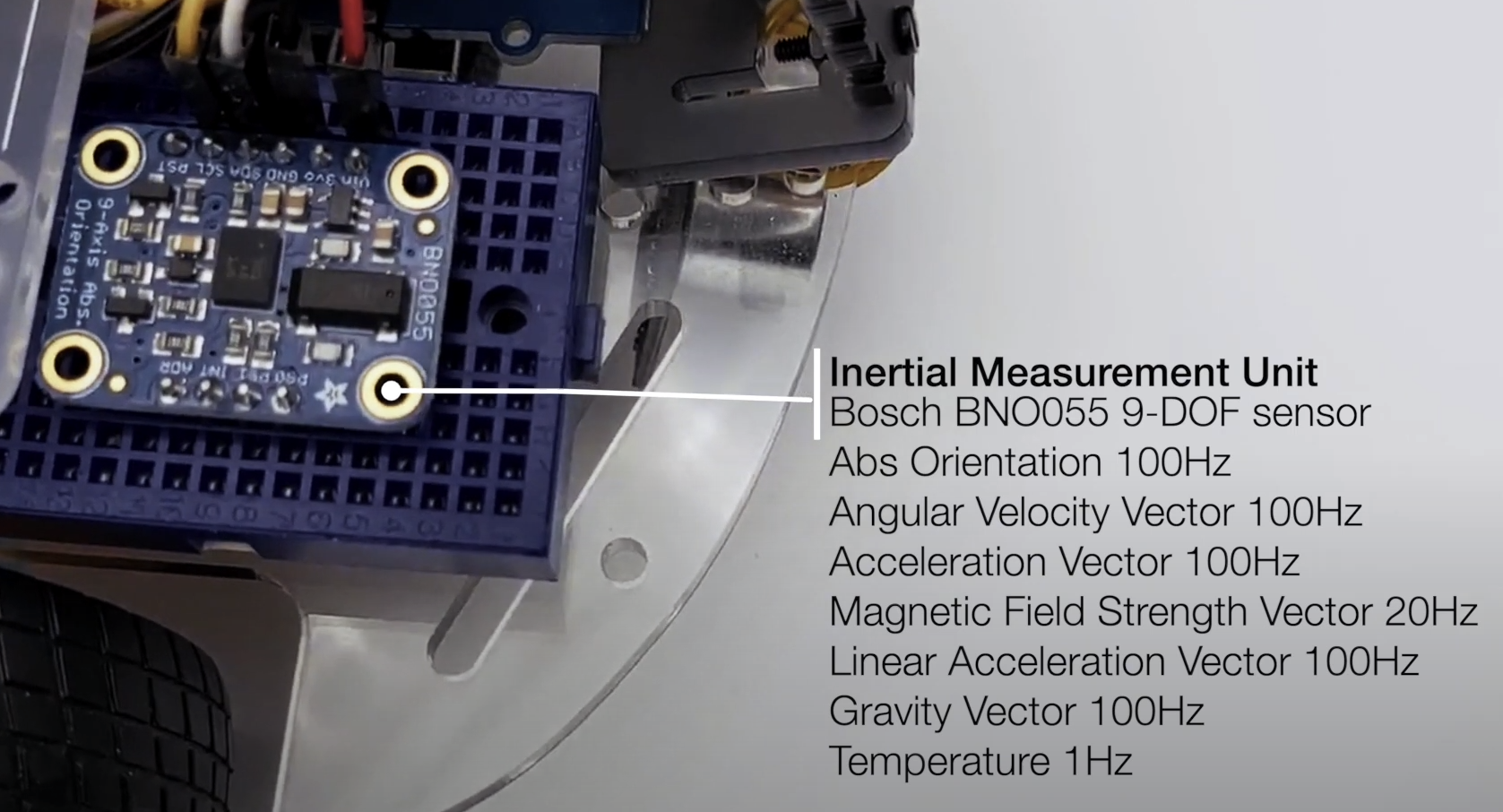

| SBC RPi 4B | MCU Teensy 3.2 | IMU Bosch |
|---|---|---|
| | | |

| Part | Store |
|---|---|
| Raspberry Pi 4 B (4 GB) | Amazon.com, Amazon.de |
| SanDisk 64 GB SD Card Class 10 | Amazon.com, Amazon.de |
| Robot Smart Chassis Kit | Amazon.com, Amazon.de |
| SLAMTEC RPLidar A2M8 (12 m) | Amazon.com, Amazon.de |
| Grove Ultrasonic Ranger | Amazon.com, Amazon.de |
| Raspi Camera Module V2, 8 MP, 1080p | Amazon.com, Amazon.de |
| Grove Motor Driver | seeedstudio.com, Amazon.de |
| I2C Hub | seeedstudio.com, Amazon.de |
| Teensy 4.0 or 3.2 | PJRC Teensy 4.0, PJRC Teensy 3.2 |
| Hobby Motor with Encoder - Metal Gear (DG01D-E) | Sparkfun |

| Part | Store |
|---|---|
| Raspberry Pi 4 B (4 GB) | Amazon.com, Amazon.de |
| SanDisk 64 GB SD Card Class 10 | Amazon.com, Amazon.de |
| Remo Base | 3D printable, see remo_description |
| SLAMTEC RPLidar A2M8 (12 m) | Amazon.com, Amazon.de |
| Raspi Camera Module V2, 8 MP, 1080p | Amazon.com, Amazon.de |
| Adafruit DC Motor (+ Stepper) FeatherWing | adafruit.com, Amazon.de |
| Teensy 4.0 or 3.2 | PJRC Teensy 4.0, PJRC Teensy 3.2 |
| Hobby Motor with Encoder - Metal Gear (DG01D-E) | Sparkfun |
| Powerbank (e.g. 15000 mAh) | Amazon.de (this powerbank from Goobay is close to the maximum possible size, LxWxH: 135.5x70x18 mm) |
| Battery pack (for four or eight batteries) | Amazon.de |

| Part | Store |
|---|---|
| PicoScope 3000 Series Oscilloscope 2CH | Amazon.de |
| VOLTCRAFT PPS-16005 | Amazon.de |
| 3D Printer for Remo's parts | Prusa, Ultimaker, etc. or use a local print service or an online one such as Sculpteo |

- Louis Morandy-Rapiné for his great work on REMO robot and designing it in Fusion 360.

- Lentin Joseph and the participants of ROS Developer Learning Path

- The configurable `diffbot_description` using yaml files (see ROS Wiki on xacro) is part of `mobile_robot_description` from @pxalcantara.
- Thanks to @NestorDP for help with the meshes (similar to `littlebot`), see also issue #1
- dfki-ric/mir_robot
- eborghi10/my_ROS_mobile_robot
- husky
- turtlebot3

- Linorobot

Your contributions are more than welcome. These can be in the form of raising issues, creating PRs to correct or add documentation and of course solving existing issues or adding new features.

diffbot is licensed under the BSD 3-Clause license.

See also open-source-license-acknowledgements-and-third-party-copyrights.md.

The documentation is licensed differently; visit its license text to learn more.